BrightSign Media Players work with a number of content management systems. With a content management system, you can upload a BrightSign presentation as an asset and it will be distributed to the the units out in the field automatically.

Recently, I was investigating what the options are for other persistent storage. The assets to be managed were not a full presentation, but were a few files that were going to be consumed by a presentation. As expected, the solution needed to be tolerant to a connection being dropped at any moment. If an updated asset were to be partially downloaded, the expected behavior would be that the BrightSign continues with the last set of good assets that it had until a complete new set could be completely downloaded.

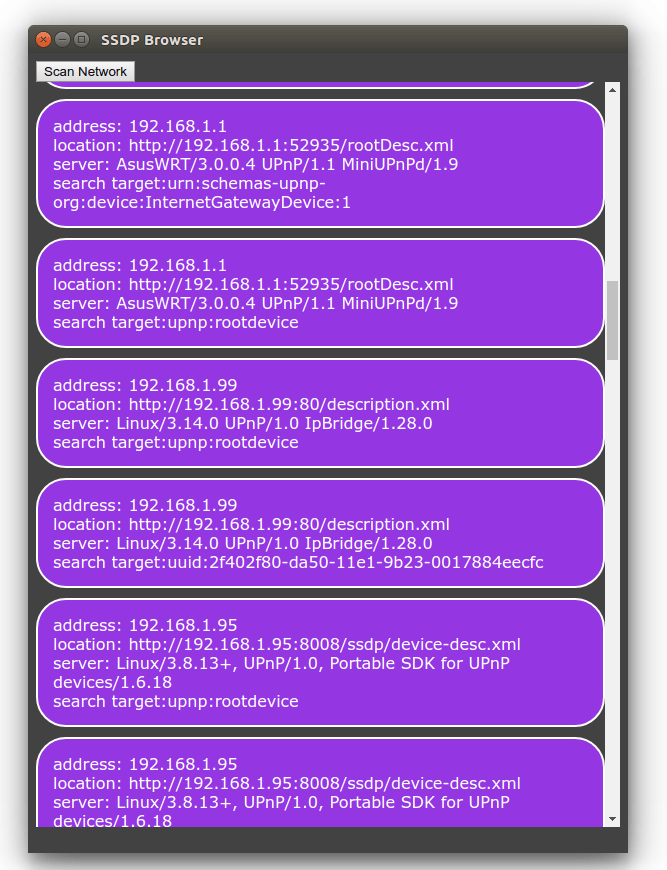

The first thing that I looked into was whether the BrightSign units supported service workers. If they did, this would be a good area to place an implementation that would check for new content and initiate a download. I also wanted to know what storage options were supported. I considered indexedDB, localStorage, and caches. The most direct way of checking for support was to make an HTML project that would check if the relevant objects were available on the window object. I placed a few fields on an HTML page and wrote a few lines of JavaScript code to place the results in the HTML page.

Here’s the code and the results.

function main() {

$('#supportsServiceWorker').text((navigator.serviceWorker)?'supported':'not supported');

$('#supportsIndexDB').text((window.indexedDB)?'supported':'not supported');

$('#supportsLocalStorage').text((window.localStorage)?'supported':'not supported');

$('#supportsCache').text((window.caches)?'supported':'not supported');

supportsCache

}

$(document).ready(main);

| Feature | Support |

|---|---|

| serviceWorker | supported |

| indexedDB | supported |

| localStorage | supported |

| cache | supported |

Things looked good, at first. Then, I checked the network request. While inspection of the objects suggests that the service worker functionality is supported, the call to register a service worker script did not result in the script downloading and executing. There was no attempt made to access it at all. This means that service worker functionality is not available. Bummer.

Usually, I’ve used the cache object from a service worker script. The use of it there was invisible to the other code that was running in the application. But with the unavailability of the service worker the code for the presentation will show more awareness of the object. Not quite what I would like, but I know know that is one of the restrictions in which I must operate.

The Caches object is usually used by a service worker. But the object can be used by the window, while it is defined as a part of the service worker spec, there’s no requirement that it be only used by it.

The next thing worth trying was to manually cache something and see if it could be retrieved.

if(!window.caches)

return;

window.caches.open(‘cache1’)

.then(function (returnedCache) {

cache = returnedCache;

});

This doesn’t actually do anything with the cache yet. I just wanted to make sure I could retrieve a cache object. I ran this locally and it ran just fine. I tried again, running it on the BrightSign player, and got an unexpected result, window.caches is non-null, and I can call window.caches.open and get a callback. The problem is that the callback always receives a null object. It appears that the cache object isn’t actually supported. It is possible that I made a mistake. To check on this, I posted a message in the BrightSign forum and moved on to trying the next storage option, localStorage.

The localStorage option didn’t give me the results that I expected on the BrightSign. For the test I made a function that would keep what I hoped to be a persistent count of how many times it ran.

function localStorageTest() {

if(!window.localStorage) {

console.log('local storage is not supported' );

return;

}

var result = localStorage.getItem('bootCount0') || 0;

console.log('old local storage value is ', result);

result = Number.parseInt( result) + 1;

localStorage.setItem('bootCount0', result);

result = localStorage.getItem('bootCount0', null)

console.log('new local storage value is ', result);

}

When I first ran this, things ran as expected. My updated counts were saving to localStorage. So I tried rebooting. Instead of saving, the count reset to zero. On the BrightSign, localStorage had a behavior exactly like sessionStorage.

Based on these results, it appears that persistent storage isn’t available using the HTML APIS. That doesn’t mean that it is impossible to save content to persistent storage. The solution to this problem involves NodeJS. I’ll share more information about how Node works on BrightSign in my next post. It’s different than how one would usually use it.

-30-