I was thinking about a video game from my childhood and how easy it would be to rebuild the game now. Having decided to do this I had to decide what platform to target. I chose to make it a browser application. While the game could be played with a keyboard or mouse I wanted to use a game pad to play it the way it was meant to be played.

Browsers expose game pads to web pages through the Gamepad API. I’ve not seen many applications that actually take advantage of this, but as game streaming becomes more popular this is likely to change. Let’s start with one of the simplest things we can do: detect what game pads are present. I’m going to share all of my code in TypeScript. TypeScript is basically JavaScript with type information added. It compiles down to JavaScript. The advantage of using it here is that you will know the data type of a variable or parameter instead of needing to analyze the code and make inferences.

Detecting a Game Pad

To start, we want to ensure that our browser supports the Gamepad API. To do this, just check whether the getGamepads function exists on the navigator object.

var isGamepadSupported:boolean = (navigator.getGamepads != null);

There are two ways that we can detect the presence of game pads. We can listen for the events that are fired when a controller is connected or disconnected, or we can just poll for their presence. Here’s a code sample that listens for the events.

function handleGamePadConnected(e:GamepadEvent) {

console.log('A game pad was connected', e);

}

function handleGamePadDisconnected(e:GamepadEvent) {

console.log('A game pad was disconnected', e);

}

window.addEventListener("gamepadconnected", handleGamePadConnected);

window.addEventListener("gamepaddisconnected", handleGamePadDisconnected);

If we run this code within a page we can see the handlers get triggered, provided the browser has focus (a browser without focus will not receive game pad events). Connecting and disconnecting a controller (if USB) or turning a controller on and off (for Bluetooth) will cause the event to trigger. On some controllers you may have to press a button for it to fully connect. The event passed to the handlers has a field named gamepad that contains all of the data for the game pad being used. The other way to get this information is to read the game pad state directly. Calling navigator.getGamepads() will return information on all the game pads connected to the system. A word of warning though: the value returned is an array that can have null elements in it. If you iterate through the array, don’t assume that because you are reading a valid index there is actually an object there.

This might sound weird at first, but imagine you are playing a four player game and player 2 decides to turn off his controller and leave. Rather than assign a new index to players 3 and 4 their controller indices will stay the same and the second element in the array will be null. To get the state of all of the game pads attached to the system use the following.

var controllerStates:Array<Gamepad|null> = navigator.getGamepads();

When I call this method I receive an array with up to 4 elements in it, most of which are null. If this function is called on every pass through the game loop, comparing one call with the next shows which controllers are present. Keep in mind that the object returned here is a snapshot of the game pad’s state, not a reference to the game pad itself. If you hold onto the object, do not rely on it updating as the state of the controller changes; call getGamepads() again to get fresh data.
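The null-slot warning above can be sketched as a small helper. This is only an illustration: the names PadLike and presentPads are hypothetical, and PadLike is a minimal stand-in that a real Gamepad object should satisfy structurally.

```typescript
// Hypothetical minimal shape; a real Gamepad has these fields and more.
interface PadLike {
    readonly index: number;
    readonly id: string;
    readonly connected: boolean;
}

// Return only the slots that actually hold a connected pad.
// Null (or undefined) slots are skipped, so indices in the result
// no longer line up with slot positions; use pad.index for that.
function presentPads<T extends PadLike>(slots: ReadonlyArray<T | null | undefined>): T[] {
    const present: T[] = [];
    for (let i = 0; i < slots.length; i++) {
        const pad = slots[i];
        if (pad != null && pad.connected) {
            present.push(pad);
        }
    }
    return present;
}
```

In a browser this would be called as presentPads(navigator.getGamepads()) on each pass through the game loop, so that every pass works from a fresh snapshot.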

There are two properties on the Gamepad object of most interest to us: the buttons property has the state of the buttons, and the axes property contains the state of each axis for each directional control on the game pad.

Axes

A D-pad input on a controller has two axes that can range from -1 to 1. There will be a vertical axis with three possible values and a horizontal axis with three possible values. While one might expect the neutral position of the controller to be zero I’ve found that even with my digital controllers the neutral value is near-zero but not quite zero. There is a range for which we could receive a non-zero value that we would need to treat as zero.

For the analog sticks the values returned will also be between -1 and 1 for each axis, but any value within this range could be returned depending on how far the stick has been pushed. When the stick isn’t being touched the axis will return a value that is near zero. Like the digital input, there is a near-zero range that should be treated as zero. Note that the controller interface may return more axes than actually exist on the controller.
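Treating the near-zero range as zero might look like the sketch below. The function names and the 0.1 threshold are assumptions chosen for illustration (a suitable threshold depends on the controller), and reading axes 0 and 1 as one stick's horizontal and vertical axes is also an assumption about the controller's layout.

```typescript
// Treat any reading closer to zero than the threshold as exactly zero.
// The default of 0.1 is an arbitrary illustrative value.
function applyDeadZone(value: number, threshold: number = 0.1): number {
    return Math.abs(value) < threshold ? 0 : value;
}

// Read the first two axes as an x/y pair with the dead zone applied.
// Missing axes are treated as zero.
function readDirection(
    axes: ReadonlyArray<number>,
    threshold: number = 0.1
): { x: number; y: number } {
    const rawX = axes.length > 0 ? axes[0] : 0;
    const rawY = axes.length > 1 ? axes[1] : 0;
    return {
        x: applyDeadZone(rawX, threshold),
        y: applyDeadZone(rawY, threshold),
    };
}
```

With a real controller, the axes array would come from a Gamepad object returned by navigator.getGamepads().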

Buttons

The buttons property of a Gamepad object has a list of buttons. The collection may return more objects than there are physical buttons on the controller; these non-existent buttons will not change state. The GamepadButton interface has three attributes.

- pressed – will be true if the button is pressed, false otherwise.

- touched – for controllers that can detect touch this attribute will be true if the button is touched, false otherwise.

- value – a value between 0 and 1 that tells how far the button is pressed or how hard it is pressed.

Even if you have a controller that doesn’t support touch or analog input, the touched and value attributes will still update. If a button is pressed it can be inferred that it is being touched, and the touched attribute updates accordingly. For a digital button the value attribute will only be 0 or 1, with no value between.

interface GamepadButton {

readonly pressed:boolean;

readonly touched:boolean;

readonly value:number;

}
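As a rough sketch of how these attributes might be consumed, the helper below lists which buttons in a snapshot are currently pressed. ButtonLike and pressedButtonIndices are hypothetical names; ButtonLike simply mirrors the three attributes described above so the helper can be tried without a controller.

```typescript
// Hypothetical stand-in mirroring the three GamepadButton attributes.
interface ButtonLike {
    readonly pressed: boolean;
    readonly touched: boolean;
    readonly value: number;
}

// Collect the indices of every button whose pressed flag is set.
// Buttons that do not physically exist never change state, so they
// simply never show up in the result.
function pressedButtonIndices(buttons: ReadonlyArray<ButtonLike>): number[] {
    const indices: number[] = [];
    buttons.forEach((button, i) => {
        if (button.pressed) {
            indices.push(i);
        }
    });
    return indices;
}
```

Given a Gamepad object named pad, pressedButtonIndices(pad.buttons) would return something like [0, 3] while the buttons at those two indices are held down.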

Other Attributes

There are some other attributes that can be found on the game pad that I haven’t discussed here. The Gamepad object has an index attribute that identifies its own index in the array. There is a string id field that gives a name identifying the controller. There is also a timestamp attribute that indicates the time at which the data in the Gamepad object was last updated.

interface Gamepad {

readonly id:string;

readonly index:number;

readonly connected:boolean;

readonly timestamp:number;

readonly mapping:GamepadMappingType;

readonly axes:Array<number>;

readonly buttons:Array<GamepadButton>;

}
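One possible use of the index and timestamp attributes is skipping work when nothing has changed between polls. The sketch below assumes the timestamp advances whenever a pad's data changes, which is how the Gamepad specification describes it; browsers may differ in practice, so treat this as an optimization to verify rather than a guarantee. StampedPad and ChangeTracker are hypothetical names.

```typescript
// Hypothetical minimal shape; a real Gamepad has both of these fields.
interface StampedPad {
    readonly index: number;
    readonly timestamp: number;
}

// Remembers the last timestamp seen for each pad index.
class ChangeTracker {
    private lastSeen: Record<number, number | undefined> = {};

    // True the first time a pad index is seen, and afterwards whenever
    // its timestamp differs from the one recorded on the previous call.
    hasChanged(pad: StampedPad): boolean {
        const previous = this.lastSeen[pad.index];
        this.lastSeen[pad.index] = pad.timestamp;
        return previous === undefined || previous !== pad.timestamp;
    }
}
```

A polling loop could keep one ChangeTracker and only redraw a controller's display when hasChanged returns true for it.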

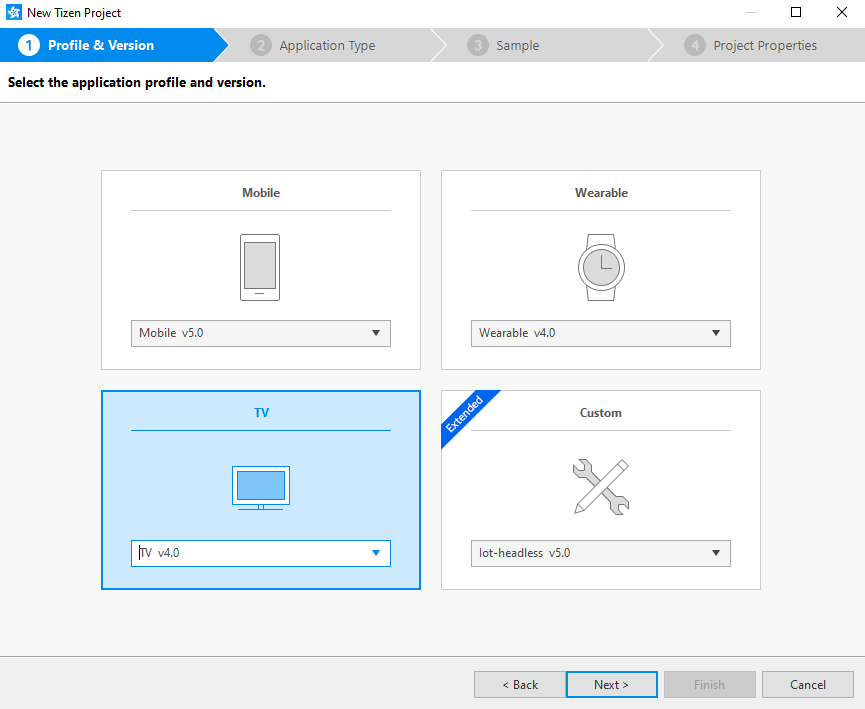

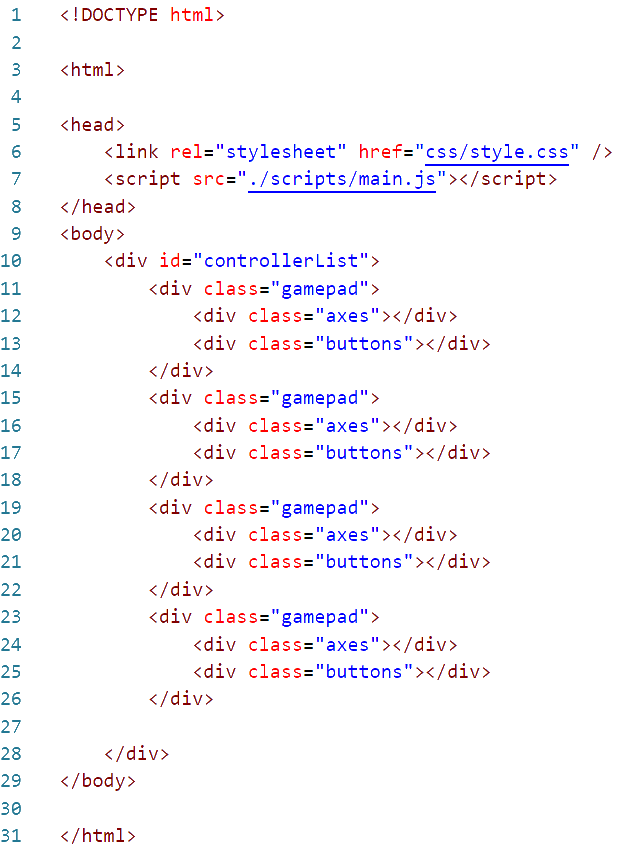

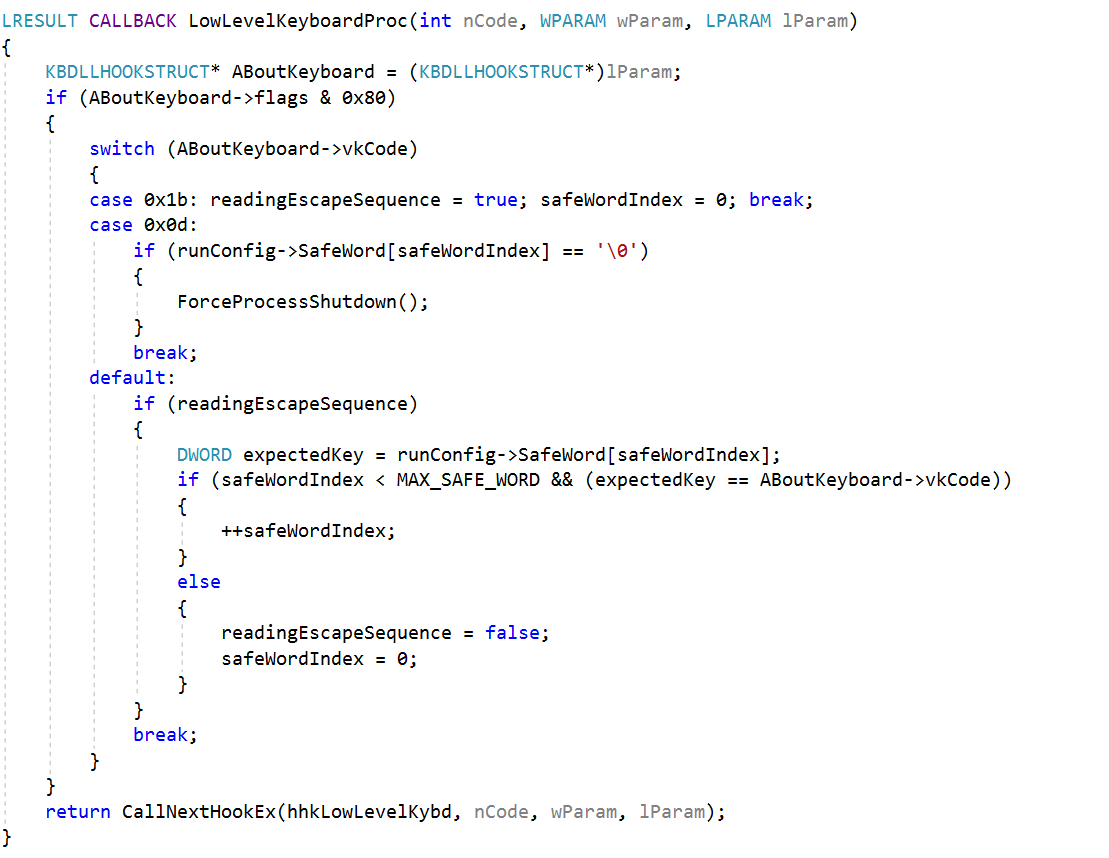

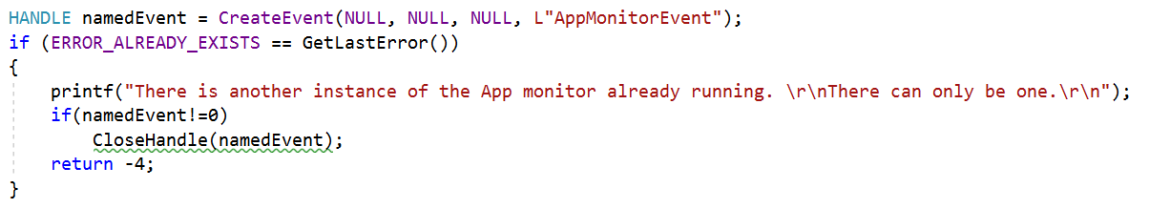

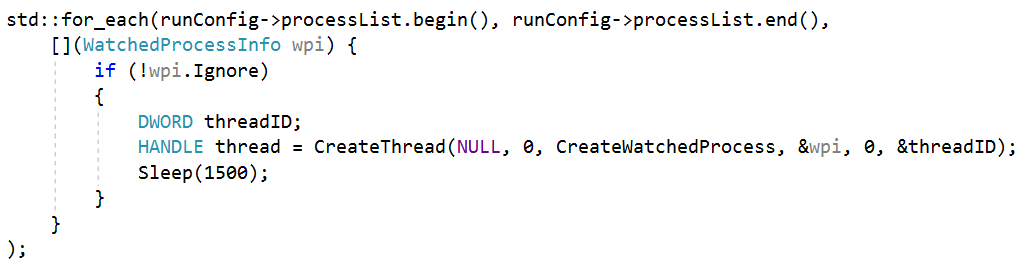

Code Sample

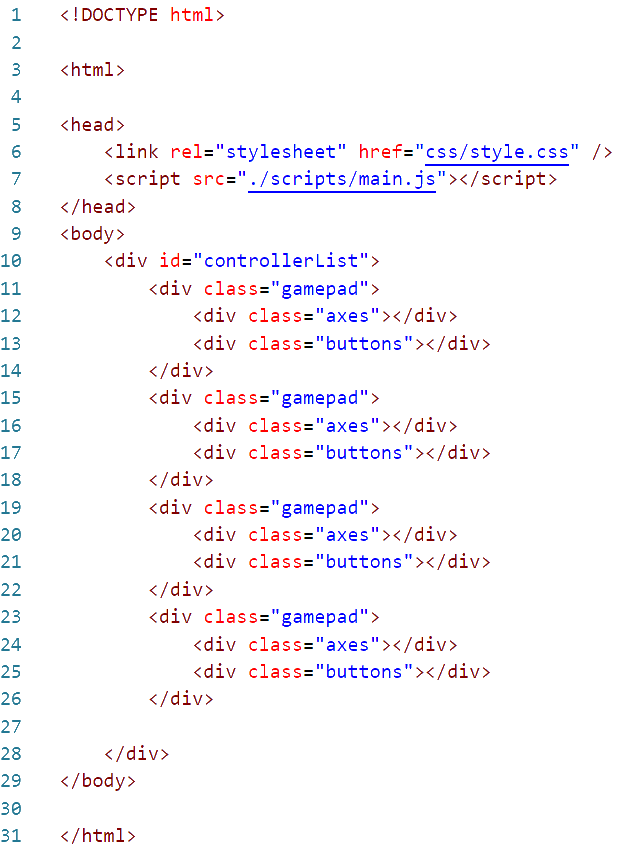

This code sample will read the states of up to 4 controllers and show their states on the screen. I’m using images to present the code here as I have found that WordPress will sometimes unescape HTML code and render it as HTML instead of text. But you can download the code sample directly to view it.

The file main.js here was compiled from a TypeScript file, main.ts. Execution in main.ts starts after the document is loaded. The first method executed is named start. It adds some handlers for the game pad being connected or disconnected. These handlers only print the event out to the console. We are more interested in what is running within the interval. In the interval the states of all of the game pads are retrieved and then we call a function (updateController) to display them on the screen.
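The overall shape of that interval can be sketched as follows. This is not the code from main.ts; pollOnce, startPolling, SlotHandler, and the 50 ms default are all assumptions made for illustration, and the pad reader and display callback are passed in as parameters so the polling step can be tried outside a browser.

```typescript
// Called once per slot on every poll; pad is null for an empty slot.
type SlotHandler<T> = (index: number, pad: T | null) => void;

// Read all slots once and hand each one, empty or not, to the handler.
function pollOnce<T>(
    readPads: () => ReadonlyArray<T | null>,
    onSlot: SlotHandler<T>
): void {
    const slots = readPads();
    for (let i = 0; i < slots.length; i++) {
        onSlot(i, slots[i]);
    }
}

// Repeat pollOnce on a timer. Returns a function that stops the polling.
function startPolling<T>(
    readPads: () => ReadonlyArray<T | null>,
    onSlot: SlotHandler<T>,
    intervalMs: number = 50
): () => void {
    const timer = setInterval(() => pollOnce(readPads, onSlot), intervalMs);
    return () => clearInterval(timer);
}
```

In a page, readPads would be () => navigator.getGamepads() and onSlot would be a display function playing the role of updateController. Passing null slots through to the handler is a design choice that lets the display clear a controller's block when it disconnects.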

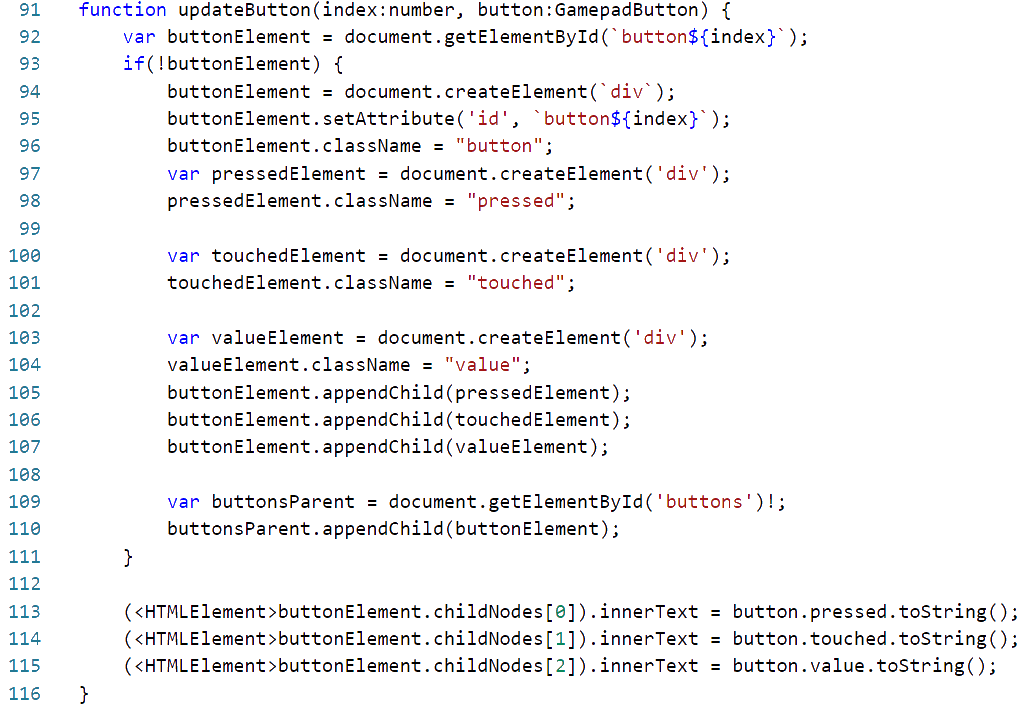

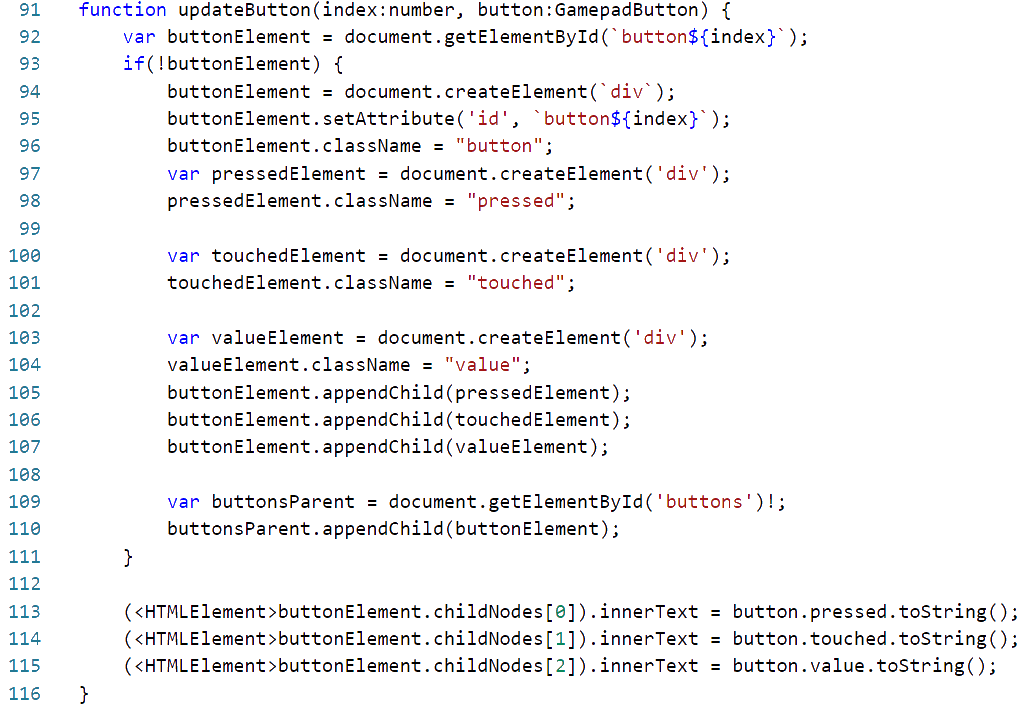

The updateController function finds the HTML block to which a specific controller index is assigned and updates the button and axis states shown in it. The functions updateAxes and updateButtons take care of the details. There are several other HTML elements needed for this that were not declared in the page. Instead they are created as they are needed.

In updateAxes, if the needed element doesn’t exist I create it, and then I show the value of the axis.

The function updateButtons does pretty much the same thing. Only instead of a single element it is updating three.

Do you have a game controller and want to try things out? I’ve got it loaded on a web page that you can try. Get your computer and controller paired. Then see the demo run at https://j2i.net/apps/gamePadStatus/